TL;DR:

- Nearly one in four Americans has used AI tools for health information, primarily to prepare for or follow up on clinician visits. AI enhances wellness by providing accessible symptom checking, personalized advice, and care navigation, supporting human practitioners rather than replacing them. Responsible AI use relies on transparency, clinical oversight, and collaboration to build trust and improve personalized health journeys.

Nearly one in four Americans has already used an AI tool for health information or advice, and most use it to prepare for or follow up on clinician visits rather than skip them entirely. That single statistic captures something important about the role of AI in wellness platforms today: it's not here to replace your doctor, your acupuncturist, or your integrative health practitioner. It's here to help you show up better informed, feel more supported between appointments, and find the right care faster. This guide walks you through exactly how AI is reshaping wellness, where it genuinely helps, and where it still needs a human hand.

Table of Contents

- Understanding AI's expanding role in wellness platforms

- The benefits and evidence supporting AI in digital mental health

- Navigating risks and ethical considerations in AI wellness solutions

- How AI enhances personalization and practitioner recommendations

- Leading successful AI integration: trust, transparency, and teamwork

- Why human-AI collaboration is the cornerstone, not replacement, in wellness platforms

- Explore AI-powered holistic health solutions with Go Holistic

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI complements human care | Artificial intelligence primarily supports and enhances traditional wellness by providing personalized information and preparation for clinical visits. |
| Mental health benefits | AI-powered chatbots and tools improve accessibility and monitoring but require supervision to ensure ethical use. |
| Risks require safeguards | Potential risks like misdiagnosis and privacy concerns make responsible deployment and human oversight essential. |
| Personalization through AI | AI helps triage and translate user data while enabling evidence-based referrals to practitioners. |
| Trust drives success | Sustainable AI wellness platform adoption hinges on transparency, trust, and meaningful human collaboration. |

Understanding AI's expanding role in wellness platforms

AI is woven into wellness platforms in more ways than most people realize. When you type a question about magnesium and sleep into a health app, or ask why a medication makes you feel foggy, you are interacting with a layer of machine learning built to interpret your language and serve back relevant, structured information. That's AI at its most accessible.

The core value here is availability. Your practitioner may not be reachable at 10 p.m., but an AI-powered platform can help you understand a lab result, clarify a supplement interaction, or identify whether your symptoms warrant an urgent appointment or a scheduled one. This is why the personalized wellness benefits of AI-informed tools have grown so quickly. They meet people where they are.

Common uses of AI in wellness platforms today include:

- Answering nutrition and exercise questions, which more than half of users report as their primary reason for engaging with AI health tools

- Physical symptom checking, helping users assess whether symptoms need immediate attention or general monitoring

- Explaining clinical information, including how medications interact, what test values mean, and how treatment plans work

- Supporting behavior change, through habit tracking, personalized reminders, and progress visualization

- Connecting users to appropriate care, by matching health concerns with relevant practitioner types and therapy modalities

Each of these functions supplements human care rather than replacing it. That distinction matters, and it shapes every responsible deployment of AI in health and wellness today.

The benefits and evidence supporting AI in digital mental health

Mental health is where the AI impact on wellness has attracted the most clinical attention, and for good reason. Access to mental health care remains unequal. Waitlists are long, costs are high, and stigma still keeps people from seeking help. AI-powered tools create a lower barrier to entry.

AI-enabled chatbots, sentiment analysis, and natural language processing (NLP, the technology that helps computers understand human speech and text) are being used to detect shifts in mood, flag worsening symptoms, and offer real-time coping support. A systematic review on AI in clinical psychology found these tools valuable as adjunct supports, meaning they work alongside therapy rather than replacing it, with notable advantages in scalability and cost. Someone who cannot afford weekly sessions may still benefit from daily check-ins with an AI-supported tool that alerts their care team if patterns worsen.

The evidence-based picture looks like this:

- Improved accessibility: AI tools are available 24/7, removing the time and location barriers that prevent many people from seeking support

- Symptom monitoring: AI can track mood entries, sleep patterns, and behavioral cues over time, creating richer data for clinicians to review

- Engagement support: Interactive AI features can keep users more consistently engaged with their mental wellness goals than passive apps do

- Early intervention signals: Pattern recognition in AI systems can flag changes before a crisis point, supporting timely clinical follow-up
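
To make the early-intervention idea a little more concrete, here is a minimal Python sketch of how a platform might compare a user's recent mood entries against their earlier baseline. Everything in it is an assumption made for illustration: the function name `flag_mood_decline`, the 1 to 10 rating scale, the seven-day window, and the 1.5-point threshold are hypothetical and not clinically validated. A real tool would use far richer signals and would pass any flag to a clinician rather than act on it alone.

```python
from statistics import mean


def flag_mood_decline(scores, window=7, drop_threshold=1.5):
    """Return True when the recent average sits well below the earlier baseline.

    scores: daily self-reported mood ratings (1 = very low, 10 = very good),
            ordered oldest first.
    window and drop_threshold are illustrative values, not clinical cutoffs.
    """
    if len(scores) < 2 * window:
        return False  # not enough history to compare two full windows
    baseline = mean(scores[-2 * window:-window])  # the earlier window
    recent = mean(scores[-window:])               # the most recent window
    return (baseline - recent) >= drop_threshold


stable = [7, 6, 7, 7, 6, 7, 7, 7, 6, 7, 6, 7, 7, 6]
declining = [7, 7, 6, 7, 7, 6, 7, 5, 5, 4, 5, 4, 4, 3]

print(flag_mood_decline(stable))     # False
print(flag_mood_decline(declining))  # True
```

A flag like this is only a prompt for a human to look closer. It says a pattern changed, not why it changed or what to do about it.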

The vital caveat: human supervision is non-negotiable. The integrative mental health benefits of combining AI tools with qualified practitioners far outweigh what either can offer alone. AI without clinical oversight is where things go wrong.

Navigating risks and ethical considerations in AI wellness solutions

Every tool that helps can also harm if misused. AI in wellness is no different. Understanding the risks is not a reason to avoid these platforms; it's a reason to choose them wisely.

The most frequently cited risks of AI in consumer health contexts include misdiagnosis, overreliance, privacy exposure, and patient anxiety. A review published in the Journal of Medical Internet Research found that responsible deployment and guardrails are just as critical as the AI's capability itself. An AI that confidently suggests the wrong answer is more dangerous than one that clearly acknowledges its limits.

Here's what responsible AI wellness platforms put in place:

- Human-in-the-loop review: A qualified clinician or trained wellness professional reviews AI outputs before they influence user decisions in high-stakes situations

- Informed consent and data transparency: Users know what data is collected, how it is used, and who has access to it

- Auditability: Platforms maintain logs that allow AI recommendations to be reviewed, questioned, and corrected

- Escalation protocols: When AI detects signals beyond its competence, it routes users to human care without hesitation

- Bias monitoring: AI systems are regularly checked for patterns that disadvantage particular groups based on age, gender, or background
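
For readers curious how human-in-the-loop review and auditability can fit together, the following Python sketch shows one possible shape. It is a simplified illustration under stated assumptions, not a description of any particular platform: the `Recommendation` and `AuditLog` classes, the `release` function, and the status names are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Recommendation:
    user_id: str
    text: str
    high_stakes: bool
    status: str = "pending"


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, event, rec, actor):
        # Every decision is stored so it can be reviewed or corrected later.
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "event": event,
            "user_id": rec.user_id,
            "actor": actor,
            "status": rec.status,
        })


def release(rec, log, reviewer_approved=None):
    """Hold high-stakes output until a human reviewer has made a decision."""
    if rec.high_stakes and reviewer_approved is None:
        rec.status = "awaiting_review"
        log.record("held_for_review", rec, actor="system")
    elif rec.high_stakes and not reviewer_approved:
        rec.status = "rejected"
        log.record("rejected", rec, actor="reviewer")
    else:
        rec.status = "released"
        actor = "reviewer" if rec.high_stakes else "system"
        log.record("released", rec, actor=actor)
    return rec.status


log = AuditLog()
rec = Recommendation("user-1", "Discuss this lab result with your clinician.", True)
print(release(rec, log))                          # awaiting_review
print(release(rec, log, reviewer_approved=True))  # released
print(len(log.entries))                           # 2
```

The design choice worth noticing is that the high-stakes path has no way to reach the user without a reviewer's decision, and both the hold and the release leave a record behind.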

Governance frameworks back this up. UK government guidance on AI agents specifically mandates auditability, meaningful consent, and human oversight as baseline requirements. These aren't optional extras; they are the floor for responsible use. When evaluating any AI-driven health platform, you can apply the same lens you would to natural wellness risks and safeguards: sourcing, transparency, and professional guidance matter at every level.

Pro Tip: Before trusting an AI wellness tool with your health data, ask two questions: Can I talk to a human if I need to? And does this platform explain how it makes its recommendations? If the answer to either is no, look elsewhere.

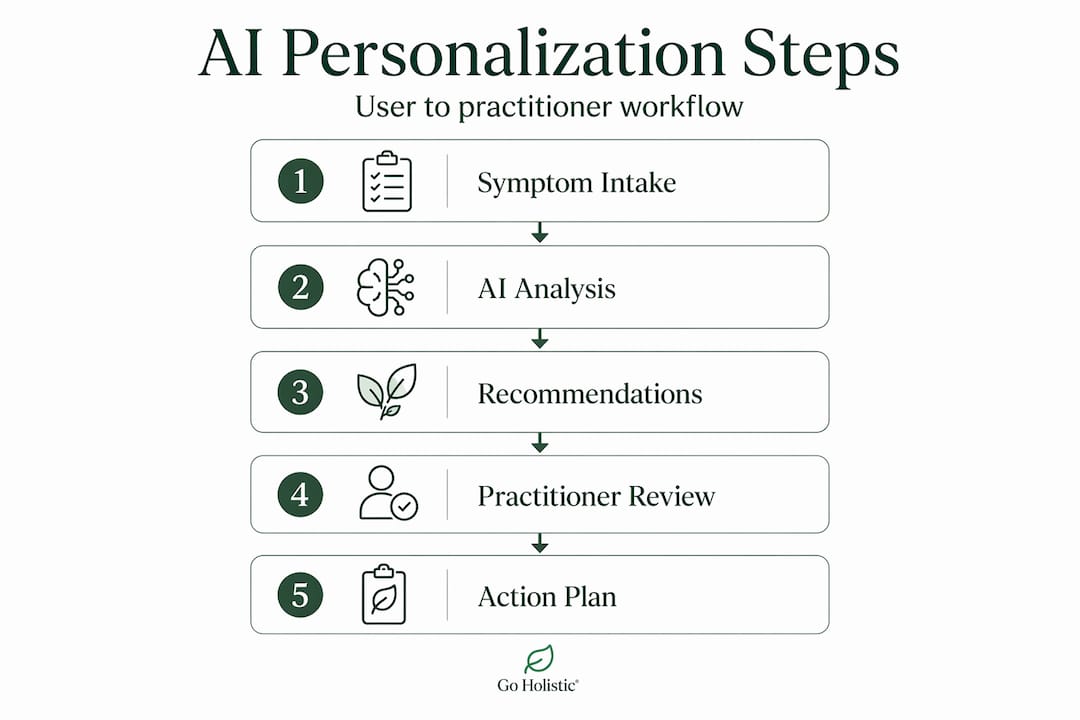

How AI enhances personalization and practitioner recommendations

This is where AI's promise becomes most concrete for people on a genuine wellness journey. Not the flashy promise of AI as a doctor replacement, but the practical value of AI as a thoughtful guide that helps you find the right human to support you.

Consider how this works in practice. You arrive at a wellness platform describing fatigue, digestive discomfort, and disrupted sleep. An AI system reads these inputs, cross-references them against a library of health patterns and evidence-based treatment associations, and returns a prioritized list of modalities worth exploring. Acupuncture. Nutritional therapy. Ayurveda. It flags that your symptom cluster has research support for these approaches and connects you to verified practitioners in your area.
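
As a rough sketch of the matching step just described, the Python example below ranks modalities by how many of a user's reported symptoms point to them. The `MODALITY_INDEX` table and the `rank_modalities` function are hypothetical, and the associations in the table are placeholder data chosen to mirror the example above. They are not clinical evidence, and a real platform would draw on a curated, reviewed evidence base.

```python
from collections import Counter

# Placeholder associations for illustration only; not clinical evidence.
MODALITY_INDEX = {
    "fatigue": ["acupuncture", "nutritional therapy"],
    "digestive discomfort": ["nutritional therapy", "ayurveda"],
    "disrupted sleep": ["acupuncture", "ayurveda", "nutritional therapy"],
}


def rank_modalities(symptoms):
    """Rank modalities by how many reported symptoms are associated with them."""
    counts = Counter()
    for symptom in symptoms:
        # Unknown symptoms contribute nothing instead of raising an error.
        counts.update(MODALITY_INDEX.get(symptom.strip().lower(), []))
    # Highest count first; ties are broken alphabetically for stable output.
    ordered = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return [name for name, _ in ordered]


print(rank_modalities(["Fatigue", "digestive discomfort", "disrupted sleep"]))
# ['nutritional therapy', 'acupuncture', 'ayurveda']
```

The output is a shortlist to explore with a practitioner, which is exactly where this kind of ranking should stop.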

Here is a quick look at how AI-supported roles compare with human practitioner roles:

| Function | AI-supported role | Human practitioner role |
|---|---|---|
| Symptom intake | Collects and organizes user input | Interprets context and nuance |
| Treatment matching | Suggests modalities based on patterns | Diagnoses and tailors a care plan |
| Progress tracking | Monitors metrics and flags changes | Evaluates clinical meaning |
| Emotional support | Offers guided prompts and resources | Provides empathy and therapeutic relationship |
| Referral | Routes to appropriate care type | Builds and maintains care relationships |

Clinically defensible AI practice follows a "support and escalation" model, where AI handles triage and data summarization while routing diagnosis and treatment to qualified clinicians. This is the model that protects users and builds long-term trust.
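
One way to picture the "support and escalation" model is as a simple routing rule: anything that looks urgent goes straight to a human, and everything else is summarized for a practitioner. The Python sketch below is a deliberately small illustration. The `triage` function, the route names, and the `RED_FLAGS` phrases are assumptions made for this example, and real escalation criteria would be defined and maintained by clinicians.

```python
# Illustrative phrases only; real escalation criteria come from clinicians.
RED_FLAGS = {"chest pain", "difficulty breathing", "suicidal"}


def triage(message):
    """Route a user message: escalate on red flags, otherwise summarize it."""
    text = message.lower()
    hits = sorted(flag for flag in RED_FLAGS if flag in text)
    if hits:
        # The AI does not attempt to assess these; a human takes over.
        return {"route": "urgent_human_care", "reason": hits}
    # Non-urgent input is organized and handed to a practitioner to interpret.
    return {"route": "practitioner_referral", "summary": message.strip()[:200]}


print(triage("I have had chest pain since this morning"))
# {'route': 'urgent_human_care', 'reason': ['chest pain']}
print(triage("Tired most afternoons and sleeping badly"))
# {'route': 'practitioner_referral', 'summary': 'Tired most afternoons and sleeping badly'}
```

Notice that neither branch produces a diagnosis or a treatment plan. In both cases the next step belongs to a person.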

For your personalized wellness strategies, the takeaway is simple: AI that helps you find a great practitioner faster is doing exactly what it should.

- AI works best when it narrows your options intelligently, not when it makes final decisions

- Evidence-based practitioner matching reduces the trial-and-error that exhausts so many wellness seekers

- Platforms that combine AI triage with verified provider directories give you both speed and safety

Pro Tip: When using an AI-powered wellness platform, be as specific as possible about your health concerns. The more context you provide, the better the AI can match you with practitioners whose specialties align with your actual needs.

Leading successful AI integration: trust, transparency, and teamwork

Technology does not transform wellness platforms. People do. This is the insight most AI conversations skip over, and it is the most important one.

Research from Harvard Medical School on AI adoption in health settings found that sustainable success depends far more on trust, transparency, and frontline involvement than on the sophistication of the technology itself. In other words, an AI system that practitioners don't trust will be ignored. One that users don't understand will be abandoned.

The elements that determine whether AI actually works in a wellness context include:

- Practitioner buy-in: When practitioners are involved in designing and validating AI workflows, adoption improves and outcomes follow

- Clear communication with users: People need to know what the AI can and cannot do, stated plainly, not buried in terms of service

- Continuous feedback loops: Platforms that gather clinician and user feedback and act on it build better AI over time

- Cultural alignment: Organizations that approach AI as a tool for serving people, not replacing them, create the conditions for responsible AI use in wellness

"The most effective AI in health care is invisible in the best sense. It handles the background work well enough that practitioners can focus entirely on the person in front of them." This principle applies just as powerfully to wellness platforms as it does to hospitals.

Smart wellness technology does not announce itself. It simply makes your path to better health a little clearer, a little shorter, and a lot less overwhelming.

Why human-AI collaboration is the cornerstone, not replacement, in wellness platforms

Here is the editorial truth this guide has been building toward: the future of AI in wellness platforms is not artificial intelligence acting alone. It's machine pattern recognition combined with human wisdom, delivering what neither can offer on its own.

We have seen this pattern clearly enough to say it with confidence. AI tools that launch without clinical partnerships, ethical guardrails, or genuine user understanding tend to erode trust quickly. People sense when something is off, when a recommendation feels generic, when a response lacks the nuance their situation demands. That moment of doubt is expensive for any platform trying to build lasting relationships with wellness seekers.

The platforms getting this right are the ones that treat AI as an amplifier of human expertise, not a substitute for it. They use AI to surface patterns, personalize pathways, and make evidence more accessible. Then they hand the actual care to real people who can ask the follow-up question, notice the thing the data missed, and adjust when life doesn't fit the algorithm.

Balancing AI and human care is not just an ethical stance; it's the only approach that holds up over time. Users who feel genuinely supported, not just processed, keep coming back. Practitioners who feel empowered by their tools, not replaced by them, deliver better care. That's the ecosystem worth building.

The most important thing you can take from this article: be curious about AI in wellness, use it as the supportive tool it's designed to be, and always keep a human practitioner in the loop for anything that matters.

Explore AI-powered holistic health solutions with Go Holistic

If this guide has sparked your curiosity about how AI and human expertise can work together on your health journey, Go Holistic is designed exactly for that. The platform uses AI to analyze your health concerns and match you with evidence-based treatment options across more than 200 therapy types, from acupuncture to Ayurveda to massage therapy. You get personalized recommendations backed by research, not guesswork.

When you're ready to take the next step, you can explore holistic treatments across a wide range of alternative modalities, browse and filter verified holistic providers by specialty and location, and book directly without the runaround. Whether you're just beginning your wellness journey or looking to deepen it, finding your path starts with the right combination of smart tools and trusted practitioners. Get started today and let AI do the searching while you focus on healing.

Frequently asked questions

How does AI complement wellness platforms without replacing healthcare providers?

AI in wellness platforms primarily supports users by providing personalized information, triaging needs, and preparing them for provider visits, while treatment decisions remain with qualified clinicians. As Gallup research confirms, AI functions as an information and preparation layer around medical visits, helping people clarify questions and understand their care plans.

What are the main risks of using AI in wellness platforms?

Key risks include potential misdiagnosis, overreliance on AI advice, privacy concerns, and patient anxiety. Responsible deployment and meaningful guardrails are just as critical as AI capability in managing these risks effectively.

Can AI-driven mental health tools replace traditional therapy?

AI tools serve as adjunct supports that improve access and monitoring, but they should not replace therapy, and they require professional supervision and ethical data handling. Clinical evidence suggests AI holds real promise as a supportive tool, but full delegation to AI should be avoided.

How do wellness platforms ensure AI recommendations are safe and trustworthy?

Platforms implement governance protocols like auditability, informed consent, and human-in-the-loop review to validate AI outputs and protect users. Consumer protection guidance treats these safeguards as baseline requirements for responsible AI deployment.

What factors influence successful AI adoption in healthcare and wellness settings?

Successful AI adoption depends on trust, transparency, clinical workflow integration, stakeholder involvement, and an organizational culture that prioritizes people first. Harvard Medical School research confirms that sustainable AI success relies on frontline involvement and shared trust far more than technical sophistication alone.